Security and artificial intelligence have been a winning combination for years. Now, the boom of generative AI has made security accessible to more people.

We’ve already covered how AI is a broad discipline that has been around since the 1960s, and we’ve also provided you with tips to secure your AI workloads.

In this article, we’ll discuss the other side of the coin, presenting several examples of how AI is already being used to secure cloud workloads. We'll also briefly cover how cybercriminals may be using AI.

Strengths of AI for security

AI is essentially computing based on statistics and probability.

As such, it excels in fuzzy logic and pattern recognition, where inputs are not clearly defined, and even slight variations can throw off traditional computing. This resilience to variations allows AI to adapt to scenarios it’s never seen before. Also, the pattern recognition makes AI good at correlating data from several sources.

Applied to security, AI is good at tasks like flagging abnormal behavior, then reading the tags of the affected workload, and semantically discerning whether it's a production or development workload to help you decide how critical that security event is.

AI and security go back a long time

AI is a proven technology for security.

Computer vision is used in security cameras to differentiate intruders from pets. It’s also used in our phones' fingerprint sensors and facial recognition.

Spam filters have always been based on statistics. First, they used Bayesian probability; now they use neural networks and machine learning. Similarly, banks currently use machine learning to flag fraudulent activity.
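To make the Bayesian idea concrete, here is a minimal sketch of a naive Bayes spam score. The function names and the tiny training set are made up for illustration; real filters use far more data and better tokenization.

```python
import math
from collections import Counter

def train(messages):
    """Count words per class from a list of (text, is_spam) pairs."""
    counts = {True: Counter(), False: Counter()}
    totals = {True: 0, False: 0}
    for text, is_spam in messages:
        counts[is_spam].update(text.lower().split())
        totals[is_spam] += 1
    return counts, totals

def spam_probability(text, counts, totals):
    """Estimate P(spam | text), assuming words are independent (the 'naive' part)."""
    vocab = set(counts[True]) | set(counts[False])
    spam_words = sum(counts[True].values())
    ham_words = sum(counts[False].values())
    # Start from the prior odds of spam vs. non-spam.
    log_odds = math.log(totals[True] / totals[False])
    for word in text.lower().split():
        # Add-one (Laplace) smoothing so unseen words never yield a zero probability.
        p_spam = (counts[True][word] + 1) / (spam_words + len(vocab))
        p_ham = (counts[False][word] + 1) / (ham_words + len(vocab))
        log_odds += math.log(p_spam / p_ham)
    # Convert log-odds back into a probability.
    return 1 / (1 + math.exp(-log_odds))

# Hypothetical training data, for illustration only.
training = [
    ("win free money now", True),
    ("free prize claim now", True),
    ("meeting notes attached", False),
    ("lunch at noon tomorrow", False),
]
counts, totals = train(training)
print(spam_probability("free money", counts, totals))
print(spam_probability("meeting tomorrow", counts, totals))
```

The first message scores above 0.5 and the second below it, because each word shifts the odds toward the class where it was seen more often.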

The same techniques are used for runtime profiling to identify the expected processes that should be running in a container and to flag anything unexpected.
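In its simplest form, runtime profiling can be sketched as a learned baseline plus a membership check. The class below is a hypothetical illustration, not how any particular product implements it; production tools build much richer profiles than a set of process names.

```python
from collections import defaultdict

class ProcessProfile:
    """Toy baseline of the processes observed per container image."""

    def __init__(self):
        # Maps a container image to the set of process names seen while learning.
        self.baseline = defaultdict(set)

    def learn(self, image, process):
        """Record a process observed during the learning period."""
        self.baseline[image].add(process)

    def is_unexpected(self, image, process):
        """Flag any process missing from the baseline (images without a baseline flag everything)."""
        return process not in self.baseline.get(image, set())

profile = ProcessProfile()
profile.learn("nginx:1.27", "nginx")
print(profile.is_unexpected("nginx:1.27", "nginx"))  # part of the baseline
print(profile.is_unexpected("nginx:1.27", "sh"))     # a shell was never observed
```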

Disclaimer: Some of these techniques were considered artificial intelligence at first, but over time, we (humans) keep raising the bar for what counts as AI.

Generative AI is making security more accessible

Up until now, you needed to be a security expert to protect your infrastructure.

Let’s take the role of a security engineer who wants to reduce the attack surface of their infrastructure by remediating some vulnerabilities.

Security tools do a good job at prioritizing the most critical vulnerabilities. They know:

- The severity of a vulnerability.

- Whether the affected resource is in use or not.

- If there’s a fix available.
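As a rough illustration, these three signals can be combined into a sort key. The `Finding` type, the ordering of the criteria, and the placeholder identifiers are assumptions made for this sketch; real tools weigh many more factors.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    cve: str             # placeholder identifier, not a real CVE
    severity: float      # score from 0 to 10
    in_use: bool         # is the affected resource actually in use?
    fix_available: bool  # is there a fix we can apply today?

def priority_key(finding):
    # Assumed policy: in-use resources first, then fixable ones, then higher severity.
    return (finding.in_use, finding.fix_available, finding.severity)

def prioritize(findings):
    """Return findings ordered from most to least urgent."""
    return sorted(findings, key=priority_key, reverse=True)

findings = [
    Finding("CVE-A", severity=9.8, in_use=False, fix_available=True),
    Finding("CVE-B", severity=7.5, in_use=True, fix_available=True),
    Finding("CVE-C", severity=8.1, in_use=True, fix_available=False),
]
for finding in prioritize(findings):
    print(finding.cve)
```

Note how the highest-severity finding ends up last: its resource is not in use, so under this policy it is the least urgent of the three.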

However, you need deep technical expertise to understand the vulnerability's real implications, navigate the internet to find remediation steps, and then implement the fix.

Using LLMs as a software engineer to implement security

LLMs have made this process more accessible.

Now, the software engineer responsible for the affected resource can handle all this, freeing up time for security engineers to focus on more technical areas.

The software engineer would land on a list of vulnerabilities and ask the AI for information on the most critical one:

- Software Engineer (SE): Which workloads are affected by CVE-2025-22871?

- AI: Provides a summary of the workloads with context on the risk exposure. It also includes a link to the full list and a query to obtain the same data in the UI.

- SE: Tell me about the coredns workload.

- AI: Provides context on where the workload is running and on similar workloads.

- SE: Can you explain this query?

- AI: Provides a step-by-step explanation of the query.

- SE: Should I fix this CVE?

- AI: Answers yes after assessing the risk based on exploitability, exposure time, and availability of a fix. It also provides some remediation steps.

It’s a game-changer to be able to ask for explanations and get personalized responses that take into account the information available about your infrastructure. Think about it: the alternative is to search the internet and spend time triaging what information applies to your particular use case.

Using MCPs to gain context during an event investigation

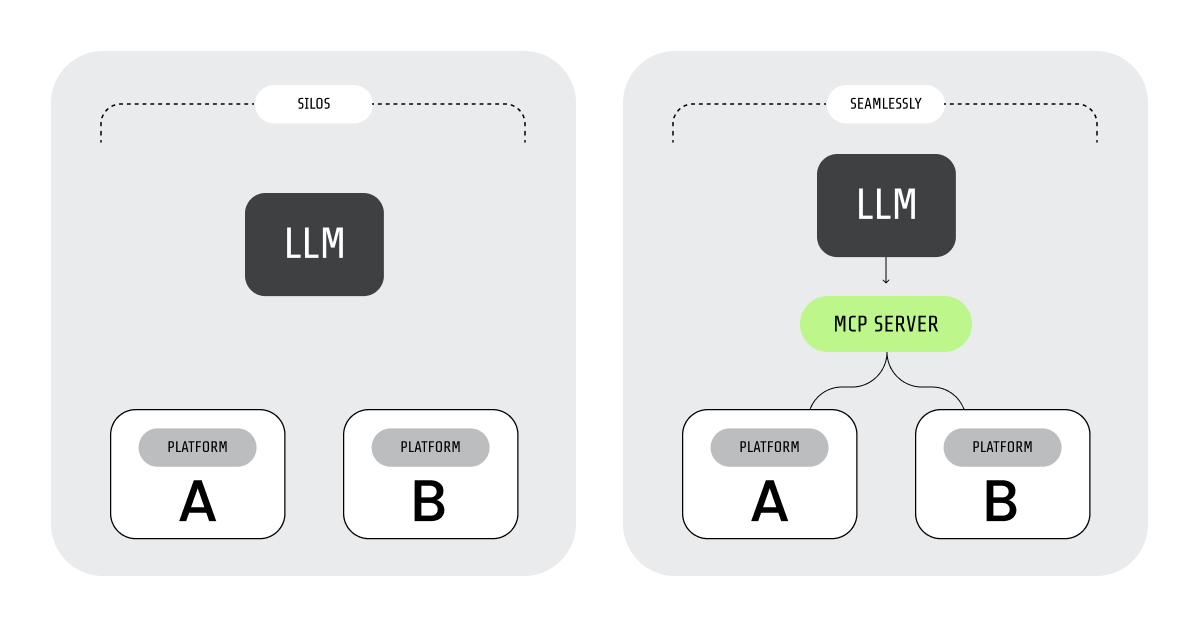

An MCP server serves context about a platform in a format that LLMs can understand. Then, it makes actions available to the LLMs so they can interact with the platform.
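The two roles described above, describing what is available and executing actions on request, can be sketched with a toy in-process registry. This is not the actual MCP protocol or any SDK; the class, the method names, and the sample event are hypothetical and only illustrate the idea.

```python
import json

class ToyContextServer:
    """Toy stand-in for a context server: lists its tools and runs them on request."""

    def __init__(self):
        self._tools = {}

    def tool(self, description):
        """Decorator that registers a function as a callable tool."""
        def register(func):
            self._tools[func.__name__] = (description, func)
            return func
        return register

    def list_tools(self):
        """Describe the available tools in a format a model can read."""
        return json.dumps(
            [{"name": name, "description": desc} for name, (desc, _) in self._tools.items()]
        )

    def call_tool(self, name, **arguments):
        """Run the tool the model asked for and return its result."""
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        _, func = self._tools[name]
        return func(**arguments)

server = ToyContextServer()

@server.tool("Return the most recent high-severity security events.")
def latest_events(limit=5):
    # Hard-coded sample data; a real server would query the platform here.
    events = [
        {"rule": "Unauthorized Write Operations to Critical System Paths", "severity": "high"},
    ]
    return events[:limit]

print(server.list_tools())
print(server.call_tool("latest_events", limit=1))
```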

We recently showcased how a security engineer could use the GitHub MCP to investigate the source of a security alert. The conversation goes as follows:

- Security Engineer: What are the latest 5 high-severity security events?

- AI: Returns a list of timestamped security events. The first one reads: “Unauthorized Write Operations to Critical System Paths.”

- Security Engineer: Focus on the first event. The repository for that code is 'sysdig-articles'. Where in the code is it happening?

- AI: Accurately identifies the file and line causing the problem. Also provides a brief explanation of what the code does: The /etc/hosts file is being edited.

- Security Engineer: Is this a security risk?

- AI: Provides a positive response, with a mostly accurate explanation.

- Security Engineer: I'm running this code in a Kubernetes cluster. Is there a native alternative for this functionality?

- AI: Provides several alternatives and a detailed explanation of how to use ‘hostAliases’.

- Security Engineer: Create an issue in GitHub so that someone can fix this security issue. Assign it to the author of the code. Include: All the information on the security issue, link to the source code causing the issue, and propose a solution for Kubernetes.

- AI: Creates the issue as requested and returns its URL.
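For reference, the `hostAliases` alternative mentioned in the conversation is a field of the Kubernetes Pod spec that adds entries to the Pod's hosts file, so the application no longer needs to write to /etc/hosts itself. A minimal sketch of such a manifest, built in Python and printed as JSON, might look like this; the Pod name, image, IP address, and hostname are made up for illustration.

```python
import json

def pod_with_host_aliases(name, image, aliases):
    """Build a Pod manifest; `aliases` maps an IP address to a list of hostnames."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Kubernetes adds these entries to the Pod's hosts file.
            "hostAliases": [
                {"ip": ip, "hostnames": list(hostnames)}
                for ip, hostnames in aliases.items()
            ],
            "containers": [{"name": name, "image": image}],
        },
    }

# Hypothetical values, for illustration only.
manifest = pod_with_host_aliases(
    "demo-app",
    "example.com/demo-app:1.0",
    {"10.0.0.12": ["internal-api.local"]},
)
print(json.dumps(manifest, indent=2))
```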

Again, it’s game-changing how the AI agent was able to assist our security engineer in identifying the issue and creating a detailed issue for the development team. Without the LLM, the security engineer wouldn’t even consider pausing to understand the problem, which would probably cause extra friction between the two teams.

More security use cases for LLMs and MCPs

We’ve explored several other scenarios in our blog over the last few months:

- Responding to alerts with Falco.

- Finding and resolving compliance issues in real time.

- Correlating SCA findings with runtime results.

- Fixing security issues pre-deployment.

- Building AWS incident response playbooks.

- Extra: 5 ways AI improves cloud detection and response.

- Automating triage and investigation for operational efficiency (Sysdig MCP + Jira MCP).

- Isolating cloud resources under investigation (AWS + Sysdig).

- Human-in-the-loop and agentic security responses with Torq and Sysdig.

- Generating an AWS policy to fix a compliance deviation.

Bad actors are also benefiting from generative AI

We’ve seen how AI is making security more accessible to both security engineers and software engineers.

Sadly, the same applies to cybercriminals.

On the social engineering side, the use of deepfakes is on the rise. They are used to impersonate executives, and also by job candidates to hide their real identities.

There isn’t much evidence of attackers using AI to write malware or to assist them during an attack. After all, they prefer not to reveal their methods. However, the same tools engineers use to automate their tasks are also available to cybercriminals. It would be naive to think they are not leveraging these tools as well.

Attacks to date have been mostly generic and indiscriminate. You are mostly safe unless you have the specific vulnerabilities that the attacker has targeted. However, we may start seeing a more personalized approach in which malware adapts to your particular infrastructure. Current AI technology is still limited, but it continues to evolve at an incredible pace.

Runtime protection is more critical than ever

Expect the unexpected.

We don’t know when AI will be helping cybercriminals discover new zero-day vulnerabilities, and it’s been a long time since you could rely on malware signatures.

Also, AI is not perfect. Relying heavily on AI may leave some unintended security gaps.

You should strengthen your runtime detection. Your last line of defense has never been so important.

Noise, an unintended consequence

A consequence of making coding and security more accessible to everyone is that more people are participating in bug bounty programs.

These programs typically reward security researchers who find and report vulnerabilities in software. However, it seems they have been hijacked by greedy reporters who submit nonsense, taking up developer time that could be spent investigating real issues.

Things got so out of hand that curl has stopped rewarding reports and has tweaked the submission process.

Main challenges of using AI for security

Don’t forget that the current AI technology has several limitations. You should think about it as a complement rather than a replacement.

AI needs guardrails

The way AI succeeds at many tasks is impressive. However, this success rate is not enough in some cases.

Failing 1% of the time when summarizing a text is acceptable; failing that often while driving a car is a tragedy.

In the same way, failing to flag even a single instance of malicious activity may compromise your entire infrastructure. As we stated earlier, runtime protection is increasingly crucial for covering any gaps.
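A quick back-of-the-envelope calculation shows why a small error rate adds up. The sketch below assumes, for simplicity, that each malicious event is missed independently with the same probability; real detections are rarely that tidy.

```python
def chance_of_missing_at_least_one(miss_rate, events):
    """Probability that at least one of `events` malicious events goes undetected."""
    # The chance of catching every event is (1 - miss_rate) ** events.
    return 1 - (1 - miss_rate) ** events

for events in (1, 10, 100):
    print(events, round(chance_of_missing_at_least_one(0.01, events), 3))
```

Under these assumptions, a detector that misses 1% of malicious events has roughly a 63% chance of missing at least one out of a hundred.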

AI can generate too much noise

The error rate is often a metric you can tune, but doing so is a balancing act between coverage and noise. If you set your AI to alert on anything suspicious, you’ll catch all the important security events, but you risk drowning under a pile of false positives. If you instead instruct your AI to report only when it’s certain, you’ll miss the security events where things are not clear.
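This trade-off can be sketched by counting false positives and missed events at different alert thresholds. The scores and labels below are invented for illustration, and the function name is hypothetical.

```python
def alert_stats(scored_events, threshold):
    """Return (false_positives, missed) when alerting on scores at or above `threshold`."""
    false_positives = sum(
        1 for score, malicious in scored_events if score >= threshold and not malicious
    )
    missed = sum(
        1 for score, malicious in scored_events if score < threshold and malicious
    )
    return false_positives, missed

# Hypothetical (suspicion score, is actually malicious) pairs.
events = [
    (0.95, True), (0.80, False), (0.60, True),
    (0.40, False), (0.30, True), (0.10, False),
]
for threshold in (0.2, 0.5, 0.9):
    print(threshold, alert_stats(events, threshold))
```

With this data, the lowest threshold misses nothing but raises two false alarms, while the highest raises none but misses two of the three malicious events.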

AI errors are hard to spot

While traditional computing fails with a clear error, generative AI prioritizes giving you a response over admitting that it doesn't have an answer. Agents will provide these wrong answers with total confidence. Stay skeptical, and double-check their work.

AI cannot be audited

AI models are black boxes, a massive collection of numeric matrices, so you can’t really get inside to see how they work.

To make things worse, they are non-deterministic, which makes debugging their behavior challenging.

As a result, if there’s a security flaw or if a model is poisoned, you won’t detect it until it’s too late.
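One common source of that non-determinism is sampling: generative models typically produce a probability distribution over possible outputs and then draw from it. The toy sketch below uses a made-up two-option distribution to show how the same input can lead to different answers.

```python
import random

def sample_output(probabilities, rng):
    """Draw one output at random, weighted by the model's probabilities."""
    options = list(probabilities)
    weights = [probabilities[option] for option in options]
    return rng.choices(options, weights=weights, k=1)[0]

# Hypothetical distribution a model might assign to the same input.
distribution = {"allow": 0.6, "block": 0.4}

rng = random.Random()  # unseeded, so every run may differ
print([sample_output(distribution, rng) for _ in range(5)])
```

Running this several times prints different sequences, which is why reproducing a specific bad answer can be so hard.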

In summary

AI and security have been a winning combination for decades.

Now, generative AI is making the world of security accessible to more people.

Like any emerging technology, AI is not a silver bullet, but you will succeed if you leverage its strengths and mitigate its limitations.